20 Azure ML Architectures That Can Save Your Company Millions - And Most Engineers Have Never Heard of Them

Here's a number that should make every CTO lose sleep:

Only 54% of AI projects ever make it to production.

The rest? They die in notebooks. They rot on a data scientist's laptop. They get killed by a cloud bill that looks like a small mortgage.

And here's the kicker - of the ones that do make it, nearly half silently degrade within months because nobody's watching them.

I recently went deep into a production-ready Azure ML Architecture Library - a meticulously crafted collection of 20 battle-tested architectural blueprints that covers everything from sub-100ms fraud detection to privacy-preserving federated learning across hospitals. What I found inside changed the way I think about deploying machine learning at scale.

This isn't theory. This is the stuff that separates the teams shipping ML at Fortune 500 speed from the ones still arguing about which framework to use.

Let's dive in.

The Problem

Let me paint a picture you've probably lived through.

Your team just trained a model. The AUC is 0.94. Everyone high-fives. You push it to an Azure endpoint, wire up a REST API, and call it a day.

Three weeks later:

- 📉 The fraud detection rate starts dropping - but nobody notices because there are no drift alerts.

- 💸 The cloud bill spikes because you left GPU clusters running 24/7 "just in case."

- 🎰 A new model version gets deployed straight to production with zero testing, and suddenly 3% of legitimate transactions get blocked.

- 🔒 A compliance audit asks "which dataset trained this model?" and your team scrambles through Slack messages to find out.

Sound familiar?

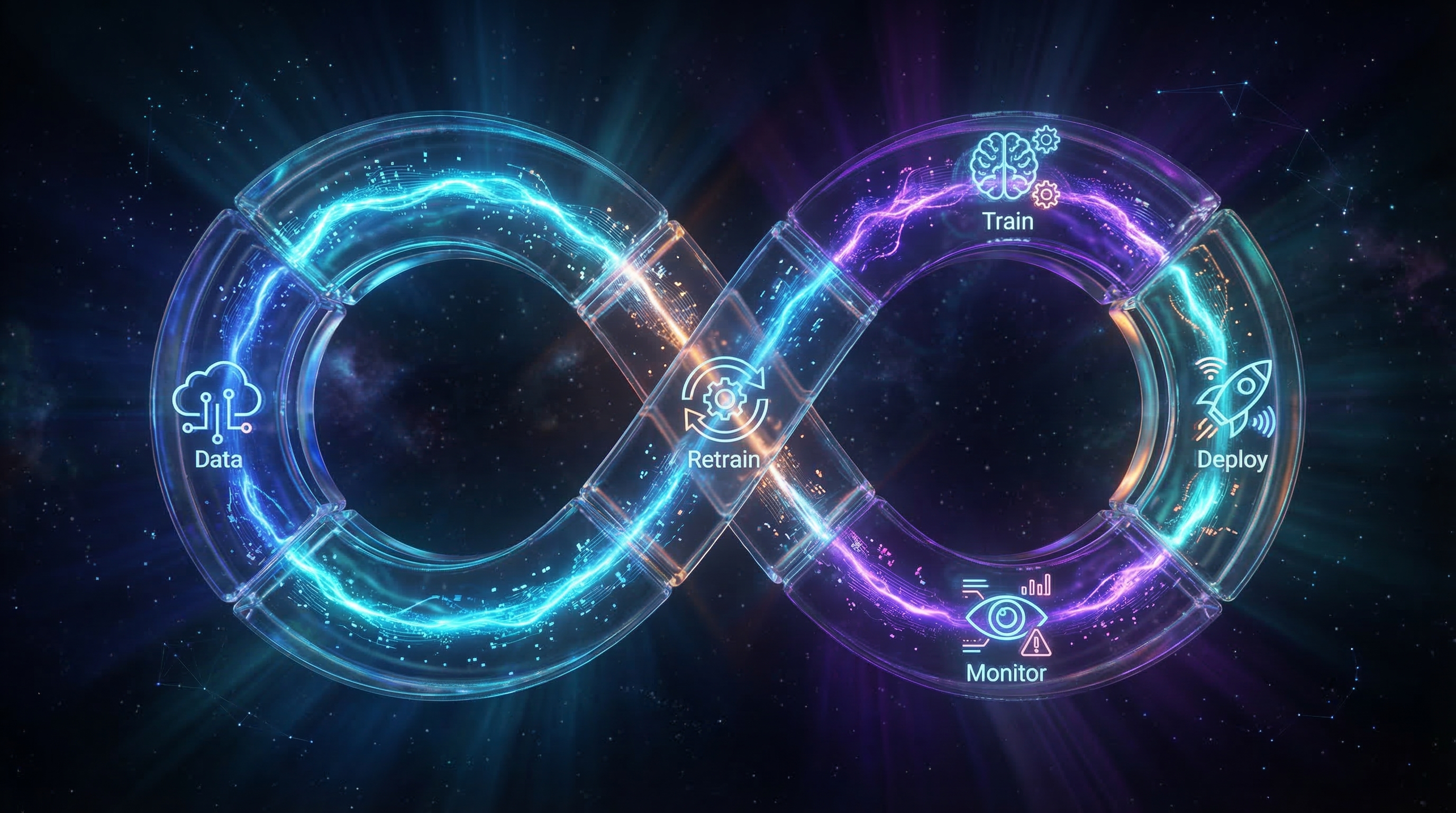

Here's the uncomfortable truth: building the model is only 20% of the work. The other 80% is the architecture around it - the deployment, the monitoring, the governance, the cost optimization, the disaster recovery.

And most teams skip that 80% entirely.

⚡ Pro Insight

According to recent industry data, enterprises spend an average of 18 months to fully operationalize a single ML model. Those with mature MLOps practices deploy 3–5x faster and see 50–70% fewer model failures.

Why This Matters

We're in a new era:

- The MLOps market has crossed $1.8 billion and is growing at 41% CAGR

- 60% of enterprise AI budgets now go toward productionizing existing models, not building new ones

- 42% of companies have abandoned most of their AI initiatives because they couldn't operationalize them

- Over 65% of Fortune 500 companies use Azure OpenAI - and they all need the infrastructure to support it

The message is clear: the competitive advantage isn't in the model anymore - it's in the system around the model.

And that's exactly what this architecture library solves.

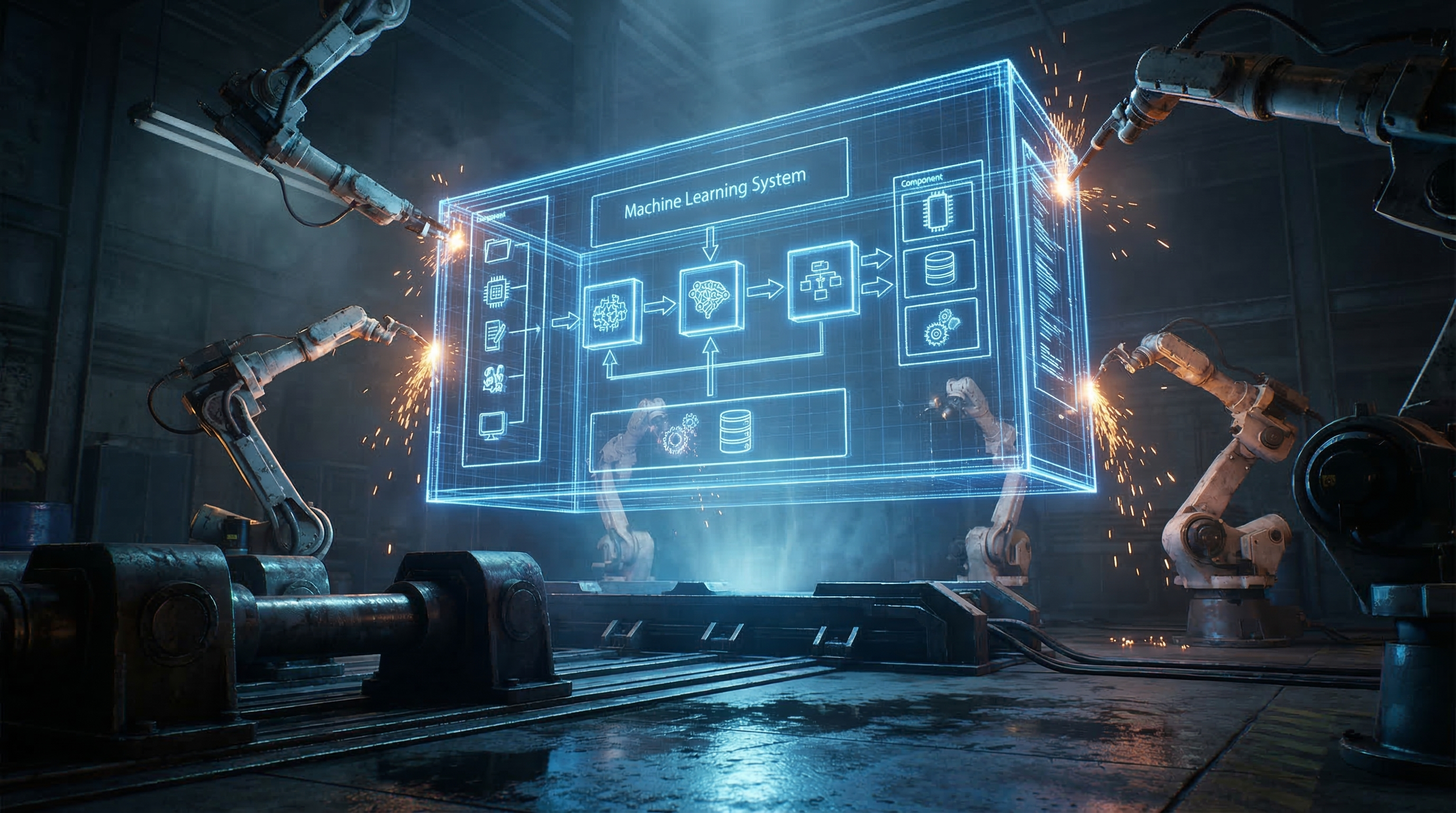

Deep Dive: The Architecture Playbook Nobody Talks About

The library I analyzed contains 20 complete architecture patterns, each one a deep-dive document covering:

- Full ASCII architecture diagrams

- Azure Well-Architected Framework alignment (all 5 pillars)

- Detailed security design with network topology

- Cost optimization strategies with actual dollar estimates

- Failure modes with probability ratings and mitigations

- Complete CI/CD pipeline YAML

- Terraform infrastructure-as-code templates

- Industry-specific compliance guidance (HIPAA, GDPR, PCI-DSS, FedRAMP)

These aren't blog-post-level overviews. Each architecture document runs 20,000–55,000 bytes of pure technical depth. Combined, the library is essentially a 500+ page enterprise ML playbook: open, reusable, and mapped to real Azure services.

Let me walk you through the most mind-blowing patterns.

🏎️ Pattern 1: Sub-100ms Online Inference — Speed Is Software, Not Hardware

Most teams think fast inference means expensive GPUs. Wrong.

The Online Inference architecture achieves p99 latency of 50ms using Standard_DS3_v2 instances — that's a 4-vCPU CPU machine costing $0.19/hour. No GPU required.

How? Three tricks:

1. ONNX Runtime: Converting a scikit-learn or PyTorch model to ONNX can give you a 2x–10x inference speedup without retraining or changing the model itself; only the scoring script needs to load it through ONNX Runtime. The ONNX Runtime uses graph optimization, operator fusion, and hardware-specific acceleration under the hood.

2. Redis Caching: For endpoints where the same users make similar requests (think recommendation engines), caching predictions for 5 minutes can reduce inference calls by 30%.

3. Blue-Green Deployments: The architecture doesn't just deploy models. It deploys them to a "Green" slot with 0% traffic, runs smoke tests, then gradually shifts traffic: 10% → 25% → 50% → 100%. If error rate exceeds 1% or latency spikes above 100ms, it automatically rolls back.

```bash
# Canary rollout: send 10% of traffic to the new (green) deployment.
# Assumes the default resource group and workspace are already set
# (for example via `az configure --defaults`).
az ml online-endpoint update \
  --name fraud-detection-endpoint \
  --traffic "green=10 blue=90"

# Wait 30 minutes, check metrics, then promote green to 100%
az ml online-endpoint update \
  --name fraud-detection-endpoint \
  --traffic "green=100 blue=0"
```

The estimated monthly cost for a 3-instance baseline with 10-instance peak scaling? ~$671/month after optimizations. That's a production-grade, sub-100ms, auto-scaling, blue-green-deployed inference endpoint for less than a junior developer's daily rate.
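The rollout rule described earlier (shift green traffic 10% → 25% → 50% → 100%, roll back if the error rate exceeds 1% or latency exceeds 100ms) can be sketched as a small decision function. This is a minimal illustration under stated assumptions, not the library's own implementation: the function name `next_green_traffic` is hypothetical, and the metrics are assumed to be supplied by whatever monitoring you already run.

```python
# Traffic percentages for the green deployment, as listed in the article.
TRAFFIC_STEPS = [10, 25, 50, 100]
MAX_ERROR_RATE = 0.01    # roll back above a 1% error rate
MAX_LATENCY_MS = 100.0   # roll back above 100 ms latency


def next_green_traffic(current: int, error_rate: float, latency_ms: float) -> int:
    """Return the next green traffic percentage, or 0 to signal a rollback.

    Hypothetical helper: the caller is expected to pass metrics observed
    while green was serving `current` percent of traffic.
    """
    if error_rate > MAX_ERROR_RATE or latency_ms > MAX_LATENCY_MS:
        return 0  # unhealthy: send all traffic back to blue
    for step in TRAFFIC_STEPS:
        if step > current:
            return step
    return 100  # already fully promoted


print(next_green_traffic(0, 0.001, 40.0))   # 10
print(next_green_traffic(25, 0.002, 45.0))  # 50
print(next_green_traffic(50, 0.03, 45.0))   # 0
```

The returned percentage would then be fed into the `--traffic` argument of the endpoint update; keeping the decision separate from the CLI call makes the gate easy to unit test.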

⚡ Pro Insight

The architecture includes load testing benchmarks: 10 instances handle 5,000 RPS at 16ms p50 latency with 99.99% success rate. Know your numbers before production.
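To make "know your numbers" concrete, the benchmark above (10 instances at 5,000 RPS) implies roughly 500 RPS per instance. The sketch below turns that into an instance estimate for a target load. It assumes throughput scales linearly with instance count, which real endpoints only approximate, and the 30% `headroom` default is an illustrative assumption rather than a figure from the library.

```python
import math

# Figures taken from the load test quoted in the article.
BENCHMARK_INSTANCES = 10
BENCHMARK_RPS = 5_000
RPS_PER_INSTANCE = BENCHMARK_RPS / BENCHMARK_INSTANCES  # 500.0


def instances_needed(target_rps: float, headroom: float = 0.3) -> int:
    """Estimate instance count for `target_rps`, keeping `headroom` spare.

    Assumes linear scaling; `headroom` (fraction of capacity left unused)
    is a hypothetical safety margin, not a value from the source.
    """
    if not 0.0 <= headroom < 1.0:
        raise ValueError("headroom must be in [0, 1)")
    usable_rps = RPS_PER_INSTANCE * (1.0 - headroom)
    return max(1, math.ceil(target_rps / usable_rps))


print(instances_needed(5_000, headroom=0.0))  # 10, matches the benchmark
print(instances_needed(2_000))                # 6
```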

💰 Pattern 2: The 90% Cost Hack — FinOps for ML

Here's a question: are you paying hot-tier storage prices for training data you haven't touched since 2023?

The Cost-Optimized Architecture is essentially an entire architecture dedicated to not wasting money. And the savings are staggering:

| Strategy | Savings |

|---|---|

| Spot Instances for training | Up to 90% on GPU compute |

| Serverless Endpoints for dev/test | 100% savings when idle |

| Storage Lifecycle (Hot → Cool → Archive) | 40–90% on storage |

| Reserved Instances for steady-state inference | Up to 72% on 3-year commitment |

| Auto-shutdown on idle compute | Eliminates waste entirely |

The killer insight? Spot Instance + Checkpointing.

When you configure Azure ML Compute Clusters with "Low Priority" (Spot) nodes, Azure gives you the GPU at an 80–90% discount. The catch? Azure can reclaim that GPU at any time.

The architecture solves this with enforced checkpointing:

```python
import torch

# Save a checkpoint every `checkpoint_interval` epochs
# (set checkpoint_interval = 1 to save after every epoch)
if epoch % checkpoint_interval == 0:
    torch.save({
        'epoch': epoch,
        'model_state_dict': model.state_dict(),
        'optimizer_state_dict': optimizer.state_dict(),
        'loss': loss,
    }, f'outputs/checkpoint_epoch_{epoch}.pt')
```

If Azure evicts your node, the job re-queues automatically. When a new Spot node becomes available, training resumes from the last checkpoint, provided the training script loads it at startup. You lose minutes of work, not hours. And you save thousands of dollars.
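Resuming only works if the script can find the newest checkpoint when it restarts. A minimal sketch of that lookup, assuming the `checkpoint_epoch_{epoch}.pt` naming used above, might look like this; the helper name `latest_checkpoint` is hypothetical.

```python
import re
from pathlib import Path
from typing import Optional

# Matches the file names written by the checkpointing snippet above.
CHECKPOINT_PATTERN = re.compile(r"checkpoint_epoch_(\d+)\.pt$")


def latest_checkpoint(directory: str) -> Optional[Path]:
    """Return the checkpoint with the highest epoch number, or None.

    Epochs are compared numerically, so epoch 10 correctly beats epoch 9
    (a plain string sort would get that wrong).
    """
    best_epoch, best_path = -1, None
    for path in Path(directory).glob("checkpoint_epoch_*.pt"):
        match = CHECKPOINT_PATTERN.search(path.name)
        if match and int(match.group(1)) > best_epoch:
            best_epoch, best_path = int(match.group(1)), path
    return best_path
```

In a training script, a non-`None` result could be passed to `torch.load`, the saved `model_state_dict` and `optimizer_state_dict` restored, and the loop restarted at the saved epoch plus one.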

The architecture even includes governance guardrails, enforced through Azure Policy and budget alerts:

- "Dev clusters MUST use Low Priority (Spot)"

- "All resources MUST have a CostCenter tag"

- "Alert the team if spend exceeds $1,000/month"

⚡ Pro Insight

Run a "Janitor Script" weekly to find and delete orphaned endpoints, untagged compute clusters, and unused container images in ACR. These silent cost leeches add up fast.
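The core of such a janitor script is a filter over a resource inventory. The sketch below only reports candidates and deletes nothing. It works on plain dictionaries so it stays self-contained; the inventory shape (`name`, `tags`, `last_used`), the function name, and the 14-day idle cutoff are all assumptions for illustration, and in practice the inventory would come from something like `az resource list`.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Tuple


def find_cleanup_candidates(
    resources: List[Dict],
    now: datetime,
    max_idle_days: int = 14,          # hypothetical cutoff
    required_tag: str = "CostCenter",  # tag named in the policy list above
) -> List[Tuple[str, str]]:
    """Return (name, reason) pairs for untagged or long-idle resources."""
    cutoff = now - timedelta(days=max_idle_days)
    flagged = []
    for res in resources:
        if required_tag not in res.get("tags", {}):
            flagged.append((res["name"], f"missing {required_tag} tag"))
        elif res["last_used"] < cutoff:
            flagged.append((res["name"], f"idle for more than {max_idle_days} days"))
    return flagged


# Hypothetical inventory, for illustration only.
now = datetime(2024, 6, 1, tzinfo=timezone.utc)
inventory = [
    {"name": "ep-old", "tags": {"CostCenter": "ml"}, "last_used": now - timedelta(days=30)},
    {"name": "cluster-x", "tags": {}, "last_used": now},
    {"name": "ep-live", "tags": {"CostCenter": "ml"}, "last_used": now - timedelta(days=1)},
]
for name, reason in find_cleanup_candidates(inventory, now):
    print(f"{name}: {reason}")
```

Reporting first and deleting in a separate, reviewed step is the safer design: a false positive costs a glance at a report rather than a production endpoint.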

🛡️ Pattern 3: Federated Learning — Training on Data You Can Never See

This is where it gets sci-fi.

Imagine three competing hospitals that each have patient data for a rare disease. Individually, none of them has enough data to train a good model. Together, they could build something incredible. But HIPAA, GDPR, and basic competitive distrust mean the data can never leave their premises.

The Federated Learning architecture solves this elegantly:

- A central Aggregator (running inside an Azure Confidential Computing SGX enclave) initializes a global model

- The model gets sent to each hospital — not the data

- Each hospital trains the model locally on their private data

- Only the encrypted weight updates (gradients) are sent back — never the raw data

- The Aggregator averages the updates inside a Trusted Execution Environment (TEE)

- Repeat for N rounds until the model converges

The result? A model trained on all three hospitals' data — without any hospital ever seeing another's records. Even the Azure administrator running the SGX enclave cannot peek at the individual updates.
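The aggregation step in the rounds described above can be sketched in a few lines. This version weights each hospital's update by its number of training examples, which is the usual federated averaging convention but an assumption here, since the article only says the updates are averaged. Encryption and the enclave are deliberately left out; the function name and the flat list-of-floats representation are illustrative.

```python
from typing import List, Sequence


def federated_average(
    updates: Sequence[Sequence[float]],
    num_examples: Sequence[int],
) -> List[float]:
    """Average client updates, weighted by each client's example count."""
    if not updates or len(updates) != len(num_examples):
        raise ValueError("need one example count per client update")
    total = sum(num_examples)
    if total <= 0:
        raise ValueError("total number of examples must be positive")
    dim = len(updates[0])
    averaged = [0.0] * dim
    for update, n in zip(updates, num_examples):
        if len(update) != dim:
            raise ValueError("all updates must have the same length")
        for i, value in enumerate(update):
            averaged[i] += value * (n / total)
    return averaged


# Two hypothetical clients: the second has three times as much data.
print(federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3]))  # [2.5, 3.5]
```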

The architecture adds Differential Privacy on top: each client clips gradients and adds Gaussian noise before sending updates, which mathematically bounds how much any single patient record can influence the model and so limits what can be reconstructed about that patient from it.
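The client-side clip-and-noise step might look like the sketch below. It clips an update to a maximum L2 norm and adds Gaussian noise scaled to that norm. The function names and the `noise_multiplier` parameter are assumptions, and the actual privacy guarantee depends on the noise scale, the number of rounds, and proper privacy accounting, none of which is shown here.

```python
import math
import random
from typing import List, Sequence


def clip_update(update: Sequence[float], max_norm: float) -> List[float]:
    """Scale the update down so its L2 norm is at most `max_norm`."""
    norm = math.sqrt(sum(v * v for v in update))
    if norm <= max_norm or norm == 0.0:
        return list(update)
    scale = max_norm / norm
    return [v * scale for v in update]


def privatize_update(
    update: Sequence[float],
    max_norm: float,
    noise_multiplier: float,  # hypothetical knob: noise std = multiplier * max_norm
    rng: random.Random,
) -> List[float]:
    """Clip the update, then add independent Gaussian noise to each entry."""
    clipped = clip_update(update, max_norm)
    sigma = noise_multiplier * max_norm
    return [v + rng.gauss(0.0, sigma) for v in clipped]


rng = random.Random(0)  # seeded only to make the illustration repeatable
noisy = privatize_update([3.0, 4.0], max_norm=1.0, noise_multiplier=1.1, rng=rng)
print(noisy)
```

Clipping comes first because the noise scale is calibrated to the largest possible contribution of any one client; without the clip there is no bound to calibrate against.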

🌊 Pattern 4: Streaming ML — Predictions at the Speed of Events

Not every prediction can wait for a REST call. Some need to happen as the data flows.

The Streaming ML architecture is an event-driven beast that processes 10,000+ events per second and delivers predictions with sub-second latency:

```
IoT/Web/Mobile → Event Hubs (32 partitions) → Azure Functions → Feature Enrichment (Cosmos DB) → ML Endpoint → Actions
```

The clever part is the dual-path processing:

- Stream Analytics handles complex event processing — tumbling windows, aggregations, joins, pattern matching

- Azure Functions handles simple event-to-prediction flows with parallel processing across 32 partitions

```sql
-- Stream Analytics: 5-minute fraud velocity check
SELECT user_id,
       COUNT(*) AS transaction_count,
       AVG(transaction_amount) AS avg_amount
FROM EventHub TIMESTAMP BY timestamp
GROUP BY user_id, TumblingWindow(minute, 5)
HAVING COUNT(*) > 10  -- Flag velocity anomalies
```

When the fraud probability exceeds 0.8? The system blocks the transaction in real-time, sends an SMS alert, and logs everything to Cosmos DB for the audit trail.
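For readers less familiar with windowed queries, the five-minute velocity check in the Stream Analytics query above can be mimicked in plain Python. This is a rough offline equivalent for intuition and unit testing, not a replacement for the streaming job: the event tuple shape is an assumption, and windows are treated as half-open intervals aligned to the epoch, which may differ from Stream Analytics at the exact window edges.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

WINDOW_SECONDS = 5 * 60   # TumblingWindow(minute, 5)
MAX_TRANSACTIONS = 10     # HAVING COUNT(*) > 10

# Assumed event shape: (user_id, epoch_seconds, transaction_amount)
Event = Tuple[str, float, float]


def velocity_anomalies(events: Iterable[Event]) -> List[Tuple[str, int, int, float]]:
    """Return (user_id, window_start, count, avg_amount) for busy windows."""
    buckets: Dict[Tuple[str, int], List[float]] = defaultdict(list)
    for user_id, ts, amount in events:
        # Each event belongs to exactly one non-overlapping window.
        window_start = int(ts // WINDOW_SECONDS) * WINDOW_SECONDS
        buckets[(user_id, window_start)].append(amount)
    return [
        (user_id, window_start, len(amounts), sum(amounts) / len(amounts))
        for (user_id, window_start), amounts in sorted(buckets.items())
        if len(amounts) > MAX_TRANSACTIONS
    ]


# Eleven hypothetical transactions by one user inside a single window.
burst = [("u1", float(second), 10.0) for second in range(11)]
print(velocity_anomalies(burst))  # [('u1', 0, 11, 10.0)]
```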

Monthly cost for 10,000 events/sec, 24/7? ~$1,970. That's real-time ML inference on a streaming pipeline for less than a single engineer's monthly cloud budget.

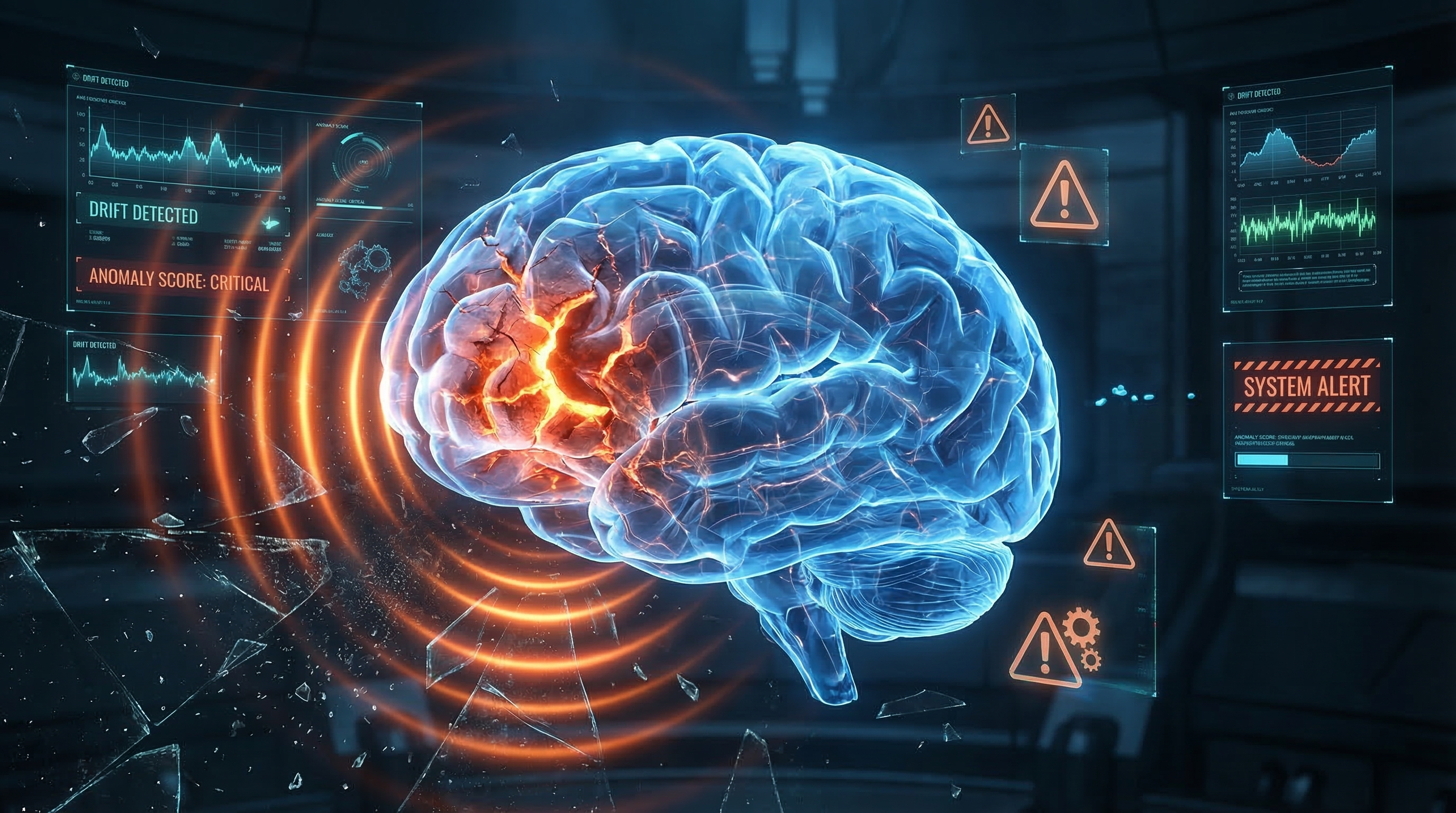

👁️ Pattern 5: The Silent Killer — Drift Detection

Here's the scariest failure mode in ML: your model is wrong, and nobody knows.

No errors. No crashes. No alerts. The model just quietly starts giving worse predictions because the real world changed and your training data didn't.

The Monitoring & Drift Detection architecture is a full observability layer that catches this:

- Data Drift: Compares production input distributions against training data baselines using Wasserstein distance and KS tests

- Prediction Drift: Monitors whether the distribution of model outputs has shifted

- Data Quality: Validates schemas, null rates, type mismatches, and range violations in real-time

- Business Impact: Tracks downstream metrics like fraud detection rate and false positive rate
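The two data drift metrics named above are simple to compute for a single numeric feature. The sketch below implements the two-sample Kolmogorov-Smirnov statistic and the one-dimensional Wasserstein distance from the empirical CDFs, using only the standard library. It is meant to show what the numbers measure; in practice `scipy.stats.ks_2samp` and `scipy.stats.wasserstein_distance` are the more usual choice. The function names here are illustrative.

```python
from bisect import bisect_right
from typing import List, Sequence, Tuple


def _sorted_pair(baseline: Sequence[float], production: Sequence[float]) -> Tuple[List[float], List[float]]:
    if not baseline or not production:
        raise ValueError("both samples must be non-empty")
    return sorted(baseline), sorted(production)


def ks_statistic(baseline: Sequence[float], production: Sequence[float]) -> float:
    """Largest gap between the two empirical CDFs (0 = identical, 1 = disjoint)."""
    a, b = _sorted_pair(baseline, production)
    return max(
        abs(bisect_right(a, x) / len(a) - bisect_right(b, x) / len(b))
        for x in a + b
    )


def wasserstein_1d(baseline: Sequence[float], production: Sequence[float]) -> float:
    """Area between the two empirical CDFs, in the feature's own units."""
    a, b = _sorted_pair(baseline, production)
    grid = sorted(set(a) | set(b))
    total = 0.0
    for left, right in zip(grid, grid[1:]):
        gap = abs(bisect_right(a, left) / len(a) - bisect_right(b, left) / len(b))
        total += gap * (right - left)
    return total


# A production sample shifted by exactly 1 unit from the baseline.
print(ks_statistic([0.0, 1.0], [1.0, 2.0]))    # 0.5
print(wasserstein_1d([0.0, 1.0], [1.0, 2.0]))  # 1.0
```

The KS statistic is scale-free and bounded by 1, which makes it easy to threshold; the Wasserstein distance is in the feature's units, which makes it easier to explain to the business.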

The automation is the key: when drift score exceeds a threshold (e.g., 0.2), the system:

- Fires an alert to the data science team via PagerDuty/Teams

- Triggers a webhook that kicks off the MLOps retraining pipeline

- Creates a ticket in ServiceNow/Jira

- Logs the incident for compliance auditing
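The fan-out above can be sketched as a threshold check that hands the drifted features to a list of actions. Everything here is a stand-in: the callables are hypothetical placeholders for the alerting, retraining webhook, ticketing, and audit-log integrations, and 0.2 is simply the example threshold quoted in the text.

```python
from typing import Callable, Dict, List

DRIFT_THRESHOLD = 0.2  # example threshold from the article

Action = Callable[[Dict[str, float]], None]


def handle_drift(
    feature_scores: Dict[str, float],
    actions: List[Action],
    threshold: float = DRIFT_THRESHOLD,
) -> Dict[str, float]:
    """Run every action once if any feature's drift score exceeds the threshold.

    Returns the drifted features so callers can see which ones triggered.
    """
    drifted = {name: score for name, score in feature_scores.items() if score > threshold}
    if drifted:
        for action in actions:
            action(drifted)
    return drifted


# Hypothetical actions that just print; real ones would call external systems.
def alert_team(drifted: Dict[str, float]) -> None:
    print("ALERT:", sorted(drifted))


def trigger_retraining(drifted: Dict[str, float]) -> None:
    print("RETRAIN requested for", len(drifted), "feature(s)")


handle_drift({"user_income": 0.35, "age": 0.05}, [alert_team, trigger_retraining])
```

Passing the per-feature scores through to the actions also supports root cause analysis, since the alert can name which features moved.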

The architecture even includes a root cause analysis workflow: drill into the drift dashboard, identify which specific features drifted (e.g., "user income distribution shifted"), and determine whether it's a real-world trend or a broken upstream data pipeline.

Inside the Project: What Makes This Library Different

Having analyzed architectural documentation across many cloud providers, here's what makes this Azure ML library genuinely unique:

1. The "When NOT to Use" Discipline

Every single architecture includes an explicit "When NOT to Use" section. This is rare and incredibly valuable. Most reference architectures sell you on their approach. This one tells you when to walk away.

For example, the Online Inference architecture explicitly says: "Do NOT use if request volume is very low (<10 RPS) — use serverless options." That single sentence can save a team months of over-engineering.

2. Industry Mapping That Actually Maps

The industries.md file doesn't just list industries — it creates a decision matrix:

| Industry | Key Use Case | Architecture | Compliance |

|---|---|---|---|

| Financial Services | Fraud ring detection | Graph ML (#19) | PCI-DSS, SOX |

| Healthcare | Patient data training | Federated Learning (#15) | HIPAA, GDPR |

| Manufacturing | Factory floor inference | Edge (#08) | ISO 27001 |

| Retail | Real-time recommendations | Feature Store (#02) + Streaming (#07) | GDPR, CCPA |

This is the kind of document that should exist in every enterprise's ML team wiki — and almost never does.

3. Failure Mode Tables With Probability Ratings

Each architecture includes a failure mode analysis table with impact, probability, and mitigation columns. This practice is borrowed from site reliability engineering (SRE) and applied to ML.

For example, the Streaming ML architecture rates both "Model Drift" and "Data quality issues leading to incorrect predictions" as High probability, each with specific mitigations. This transforms architecture documentation from "what to build" into "what will break and how to survive it."

4. Terraform + Diagrams + YAML — The Full Stack

Each architecture ships with a corresponding /terraform directory and /diagrams folder. This isn't documentation — it's a deployable blueprint.

Key Takeaways

If you take nothing else from this article, remember these five rules:

1. 🏎️ Speed is Software, Not Hardware

Convert to ONNX before buying GPUs. Cache predictions before adding replicas. Optimize your scoring script before scaling your cluster.

2. 💰 Never Pay Full Price for Training

Spot Instances + Checkpointing = 80–90% savings. If your training jobs aren't using Low Priority VMs, you're leaving money on the table.

3. 🚦 Zero-Downtime Deployment is Non-Negotiable

Blue-green with canary rollouts and automatic rollback. If your deployment strategy is "push and pray," you're one bad model away from a production incident.

4. 👁️ Monitor Your Models Like You Monitor Your Servers

Data drift, prediction drift, data quality, and business metrics — all automated, all alerting, all feeding back into a retraining loop.

5. 🔐 Privacy is an Architecture, Not a Checkbox

Federated Learning, Confidential Computing, Differential Privacy — these aren't academic concepts. They're production patterns being used by hospitals, banks, and government agencies today.

⚡ Pro Insight

The top 5 cross-industry architectures every ML team should implement first: MLOps CI/CD (#09), Cost Optimization (#17), Multi-Region DR (#18), Monitoring & Drift (#11), and Explainability (#12). These are foundational regardless of your use case.

Final Thoughts

Machine learning has left the lab. It's no longer a data scientist's side project running in a Jupyter notebook — it's a mission-critical production system that needs the same architectural rigor we give to databases, APIs, and infrastructure.

The teams that win won't be the ones with the best models. They'll be the ones with the best systems around their models — the auto-scaling, the drift detection, the cost governance, the failover, the compliance auditing, the canary deployments.

This architecture library exists because one team decided to codify everything they learned the hard way — across 20 patterns, 6 industry verticals, and hundreds of Azure services — into something reusable.

The blueprints are there. The Terraform is written. The failure modes are documented.

The only question is: will you architect your ML for production, or will you be part of the 46% that never makes it?

What's the biggest ML deployment disaster you've survived? Drop your war stories in the comments. I'll start: I once left a 4-node GPU cluster running for 3 weeks after a holiday break. That invoice still haunts me. 😅

If you found this useful, share it with your ML team. They'll thank you when the next cloud bill arrives.

Written with insights from the Azure ML Architecture Library, Azure Well-Architected Framework, and the latest industry research on MLOps, federated learning, and cloud-native ML infrastructure.

📌 Connect & Support

🐙 GitHub Repository: View Source Code + Terraform Templates

📧 Email: connect@jaydeepgohel.com — Let's connect and discuss cloud architecture

☕ Buy Me a Coffee: If you found this work valuable and want to support more content like this, buy me a coffee ☕

This article was written with a little help from AI.

💬 Feedback: Share your thoughts in the comments below — What did you love? What can be improved? Your feedback helps me create better content! 👇
