20 Production-Grade ML Architectures for Enterprise Systems on Google Cloud

You're Probably Building ML Wrong - Here's the Blueprint Google Doesn't Hand You

There's a dirty secret in the machine learning world that nobody talks about at conferences.

~86% of ML projects never make it to production.

Let that sink in. Nearly 9 out of 10 models that data scientists painstakingly train, tune, and celebrate in Jupyter notebooks… die in the gap between "it works on my machine" and "it works for millions of users."

I've spent months dissecting a library of 20 end-to-end ML architectures purpose-built for Google Cloud Platform - complete with Terraform code, ASCII diagrams, compliance mappings, and production war stories. What I found wasn't just a collection of reference architectures. It was a masterclass in building ML systems that actually survive contact with reality.

Buckle up. This is the article I wish existed when I started my cloud ML journey.

The Problem: ML's "Valley of Death"

Here's a thought experiment. 🧠

Imagine you've trained a fraud detection model. It's beautiful. 99.2% accuracy on your test set. Your team pops champagne. Your VP sends a congratulatory Slack message.

Then reality hits.

- Who deploys it? Your data scientist doesn't know Kubernetes.

- Where does it run? A single VM that crashes on Black Friday.

- How fast is it? 800ms latency - users have already left.

- Is it compliant? Your CISO just learned you're storing PII in plain text.

- When does it break? Three months later, silently, because nobody's watching.

This isn't hypothetical. This is every company's ML story until they get serious about architecture.

⚡ Pro Insight

The MLOps market is projected to explode from $1.7B in 2024 to $39B by 2034 - a 37.4% CAGR. The companies investing in ML architecture now are the ones that will dominate their industries.

Why This Matters: The Architecture-First Revolution

The GCP ML Architecture Library isn't just documentation. It's a paradigm shift in how we think about production machine learning.

Here's what makes it different:

| Traditional Approach | Architecture-First Approach |

|---|---|

| Train model → figure out deployment later | Design the full lifecycle before writing a single line of code |

| "Works on my machine" | Reproducible across dev, staging, and prod |

| Manual deployment every quarter | CI/CD/CT - Continuous Everything |

| Hope the model doesn't break | Automated drift detection and self-healing |

| Compliance as an afterthought | Security and governance baked into every layer |

Each of the 20 architectures follows a rigorous 23-point structure - from intent and problem statement, through GCP Architecture Framework mapping, all the way to failure modes, testing strategies, and open-source alternatives.

And here's the kicker: every single one comes with production-ready Terraform code.

No more "exercise left to the reader." No more hand-wavy architecture diagrams. This is copy-paste-deploy infrastructure.

Deep Dive: The 20 Architectures That Cover Everything

Let me walk you through the highlights - grouped by what they solve. Think of these as building blocks you can mix and match to create your perfect ML platform.

🏗️ The Foundation Layer

Architecture 01: Data Lakehouse Ingestion & Governance

Every ML system lives or dies by its data. This architecture unifies the chaos.

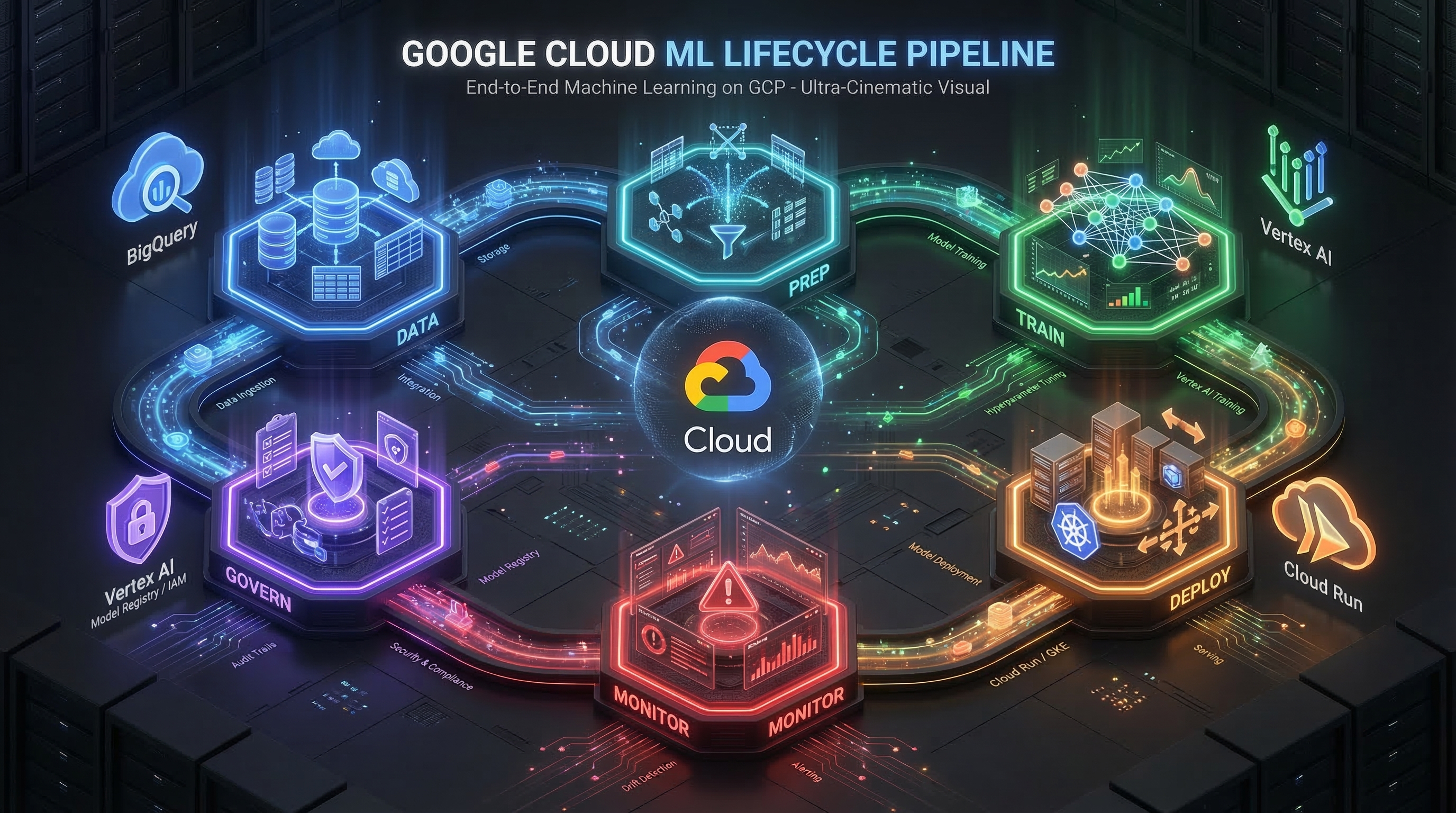

Source → GCS/Pub/Sub → Dataflow (Clean) → BigQuery (Store) → Vertex AIThe genius here? Dataflow handles both batch and streaming with a single SDK (Apache Beam). No more maintaining two separate pipelines. And Dataplex acts as your intelligent governance brain - automatically discovering metadata, enforcing quality rules, and managing access policies across your entire data estate.

💡 Hidden Gem: GCS and BigQuery offer 99.999999999% durability - that's 11 nines. Your data is literally more durable than the hard drive it was originally stored on.

Architecture 02: Feature Store & Serving

Here's where most ML teams go wrong. They compute features one way for training and a completely different way for inference. Result? Training-serving skew - the silent killer of model accuracy.

Vertex AI Feature Store solves this by being the single source of truth for features. Train on the same features you serve. Period.

⚡ The Training Powerhouse

Architecture 03 & 04: Batch + Distributed Training

Need to train a medical imaging model on millions of DICOM files? Architecture 04 gives you multi-node GPU training with automatic hyperparameter tuning via Vertex AI Vizier.

But here's what I love: the documentation doesn't just tell you how to distribute training - it tells you when NOT to:

❌ Don't use distributed training if your model fits on a single GPU. The communication overhead will make it slower, not faster.

This is the kind of battle-tested wisdom you only get from engineers who've been burned.

Architecture 16: AutoML & Experimentation

Not every problem needs a custom model. AutoML is your fastest path from "I have data" to "I have a model." Combined with Vertex AI Experiments and MLflow integration, you get full experiment tracking without the infrastructure headache.

🚀 The Serving Arsenal

This is where things get really interesting.

Architecture 05: Online Inference - Sub-50ms Predictions

Client → Global LB → Vertex AI Endpoint (Traffic Split) → Model → ResponseThe architecture serves predictions in under 50 milliseconds at P95. How?

- Optimized containers: TensorFlow Serving, TorchServe, or Triton - not your custom Flask app

- gRPC over REST: 2-3x higher throughput

- GPU acceleration: NVIDIA T4/A100 when you need it

- Traffic splitting: Deploy to 10% canary, watch metrics, then gradually roll to 100%

# Never deploy directly to 100%. Start with canary.

endpoint.deploy(

model=new_model,

traffic_percentage=10, # Canary: 10% traffic

machine_type="n1-standard-4",

accelerator_type="NVIDIA_TESLA_T4",

min_replica_count=1,

max_replica_count=10,

)⚡ Pro Insight

Setmin_replica_count > 0for production models. Cold starts can add seconds of latency that kill user experience.

Architecture 07: Streaming ML - Predictions in Real Time

When batch isn't fast enough, you need streaming. Pub/Sub + Dataflow + Vertex AI gives you real-time ML on streaming data - think sensor monitoring, clickstream analysis, and network optimization.

The key insight? Dataflow's Streaming Engine separates compute from state storage, so you can process millions of events per second without worrying about memory limits.

Architecture 08: Edge Inference - ML Without the Cloud

Sometimes the cloud is too far away. Literally.

For factory floors, autonomous vehicles, and IoT devices, this architecture deploys TensorFlow Lite models to Edge TPUs. The model runs on the device, with latency measured in microseconds, not milliseconds.

🛡️ The Governance & Trust Layer

This is what separates toy projects from enterprise ML. And honestly? This is where the library really shines.

Architecture 09: MLOps CI/CD - The Full Pipeline

Code → CI (Build/Test) → CT (Train/Register) → CD (Deploy Staging → Prod)Notice that middle step? CT - Continuous Training. This isn't just CI/CD for code. It's CI/CD for models. When new data arrives or code changes, the pipeline automatically:

- Retrains the model

- Evaluates it against the current production model

- Registers it if it's better

- Deploys it through staging → approval gate → production

All with Blue-Green deployments for zero-downtime rollouts and instant rollback.

⚡ Pro Insight

Only 32% of companies properly implement CI/CD for ML models. Getting this right puts you ahead of two-thirds of the industry.

Architecture 10 & 12: Model Registry + Explainability

In regulated industries (finance, healthcare, public sector), you can't just ship a model and hope for the best. You need:

- Full lineage: Which data trained which model, deployed by whom, approved by whom

- Explainability: Why did the model deny this loan? (Required by FCRA and GDPR Article 22)

- Bias detection: Is the model fair across demographic groups?

Vertex AI Explainable AI provides SHAP-based feature attributions out of the box. No custom implementation needed.

Architecture 11: Monitoring & Drift Detection - Your Model's Immune System

Here's a scary stat: production models silently degrade over time. COVID changed shopping patterns. New currencies emerge. User demographics shift.

This architecture uses Jensen-Shannon divergence to continuously compare your production data against your training baseline. When drift exceeds your threshold? Automatic alerts via Slack, PagerDuty, or email - plus a triggered retraining pipeline.

Serve → Log (10% sample) → Detect Drift (JS divergence) → Alert → Retrain → DeployThink of it as an immune system for your ML models. It detects infections (drift) before they become deadly (business impact).

🔮 The Cutting Edge

These architectures are where the future lives.

Architecture 15: Federated Learning - Train Without Seeing the Data

This one blew my mind. 🤯

Imagine training a fraud detection model across 10 banks - without any bank sharing its customer data. Here's how:

- Central server distributes a global model to participating devices/organizations

- Each participant trains locally on their own data

- They upload only encrypted gradient updates (not data!)

- A Trusted Execution Environment aggregates gradients with differential privacy noise

- The global model improves - without any raw data ever being centralized

Distribute Model → Local Training → Secure Aggregation (TEE) → Update Global ModelWith a privacy budget of ε=1.0, even the central server can't reverse-engineer individual data points from the aggregated updates. This isn't just clever engineering - it's a regulatory requirement enabler for GDPR, HIPAA, and cross-border compliance.

Architecture 19: Graph ML - When Relationships Are the Feature

Traditional ML treats every row as independent. But what if the connections between data points are the most important signal?

Graph Neural Networks on Vertex AI + BigQuery Graph let you:

- Detect fraud rings by analyzing transaction networks

- Discover drug interactions by modeling molecular structures

- Build recommendation engines that understand user-item-item relationships

The architecture uses PyTorch Geometric for GNN training with graph convolution layers that aggregate neighbor features - your model literally asks its friends what they think before making a prediction.

-- BigQuery Graph: Query your social network as a graph

GRAPH social_network

MATCH (u:User)-[:FRIEND]->(f:User)

WHERE u.user_id = 1

RETURN f.user_id, f.interestsArchitecture 20: Reinforcement Learning & Simulation

RL is where ML gets really sci-fi. Train robot arms to grasp objects. Optimize data center cooling. Teach autonomous vehicles to navigate traffic.

The architecture runs 100 parallel simulations on Compute Engine (using Spot VMs for 60-90% cost savings), collects experiences into a replay buffer, and trains a PPO agent on GPU. The agent goes from 20% success rate to 80%+ through pure trial and error.

💡 Mind-blowing detail: The simulation-to-real-world transfer is one of the hardest problems in RL. The architecture addresses this with domain randomization - training on slightly randomized simulations so the agent learns to generalize.

Inside the Project: What Sets This Library Apart

After dissecting every file, here are the hidden gems that most people would miss:

1. The Industry Mapping Is a Strategy Document

The industries.md file isn't just a lookup table. It's a strategic playbook for 8 industries:

| Industry | Starter Architecture | Why Start Here |

|---|---|---|

| Financial Services | Explainability & Responsible AI | Regulatory requirement (FCRA, SR 11-7) |

| Healthcare | Privacy-Preserving ML | HIPAA compliance is non-negotiable |

| Retail | Feature Store | Foundation for all recommendation systems |

| Manufacturing | Edge Inference | Models must run on the factory floor |

| Technology & SaaS | MLOps CI/CD | Rapid iteration is everything |

⚡ Pro Insight

95% of retail companies use feature stores. 90% of healthcare companies use privacy-preserving ML. If your industry isn't doing this, you're behind.

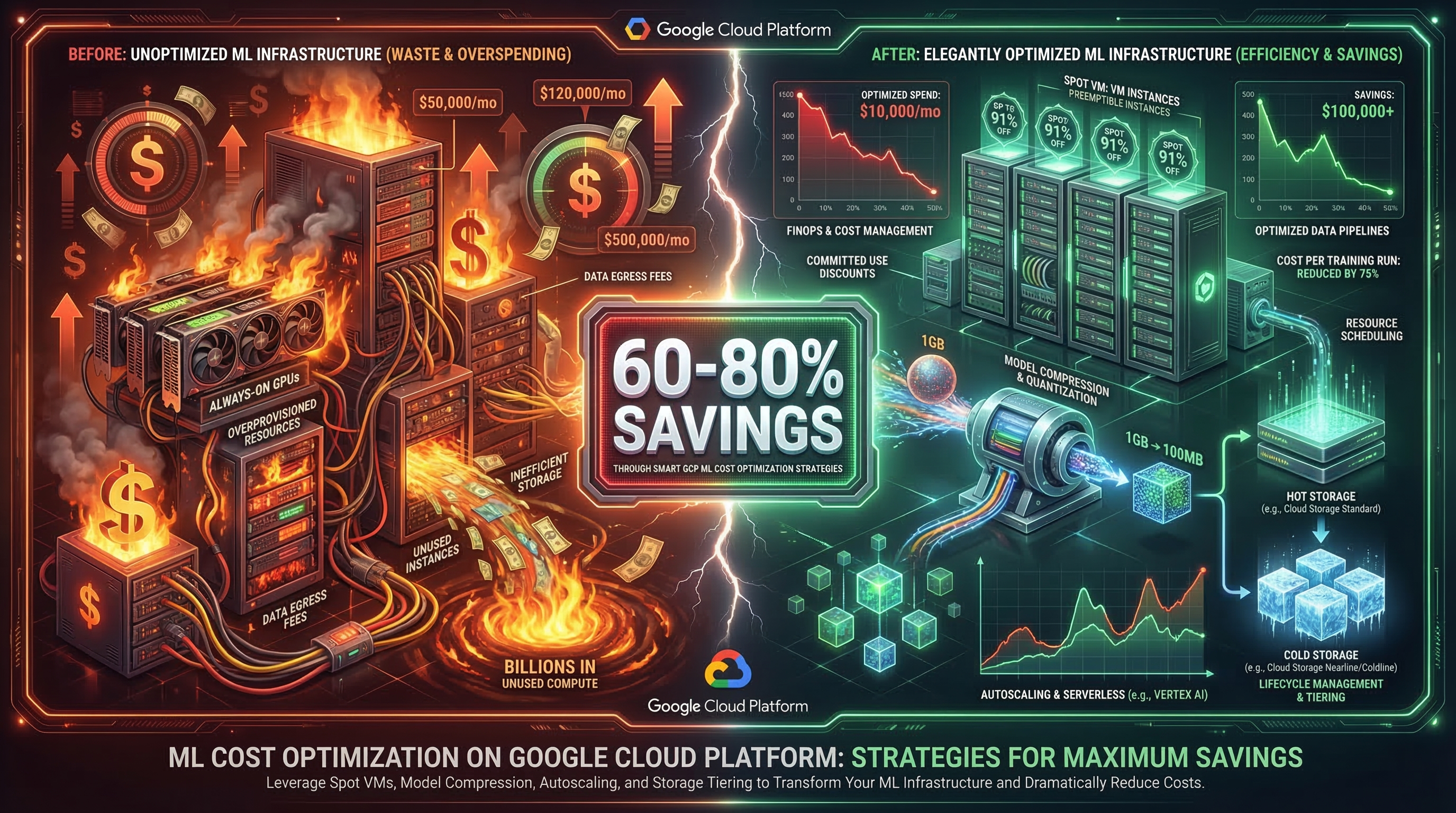

2. The Cost Optimization Architecture Is Pure Gold

Architecture 17 shows you how to cut ML costs by 60-80% with concrete numbers:

- Training: Spot VMs save 70% ($5.00 → $1.50 per 10-hour job)

- Serving: Autoscaling saves 67% ($720/month → $240/month)

- Storage: Lifecycle policies save 63% ($200/month → $74/month for 10TB)

- Model compression: Quantization + pruning + distillation = 10x smaller models

1 GB model → Quantize (250 MB) → Prune (125 MB) → Distill (100 MB)

Latency: 100ms → 20ms | Cost: $500/month → $50/month

Accuracy: 95% → 92% (acceptable for most use cases)

3. Every Architecture Has a "When NOT to Use" Section

This is chef's kiss 👨🍳. Most documentation tells you when to use something. This library also tells you when to walk away:

- Don't use Distributed Training if your model fits on one GPU

- Don't use Federated Learning if you have fewer than 1,000 devices

- Don't use Graph ML if your data has no relationships

- Don't use RL if you have labeled data (just use supervised learning!)

4. Terraform Is Not an Afterthought

Each architecture includes modular Terraform code organized by service:

terraform/05-online-inference-low-latency/

├── main.tf

├── variables.tf

├── outputs.tf

├── bigquery.tf (and more...)

└── sample_app/

└── main.pySecurity-by-default: least privilege IAM, CMEK encryption, VPC Service Controls. Every. Single. Architecture.

The Bigger Picture: Where GCP ML Is Heading

These architectures aren't built in a vacuum. They align with the massive shifts happening in cloud ML right now:

🤖 The Rise of Agentic AI: Google's Agent Development Kit (ADK) and Agent2Agent protocol are enabling AI agents that don't just answer questions - they autonomously plan, execute, and collaborate. These 20 architectures provide the infrastructure backbone that agents will run on.

☁️ Cloud-Native ML is the Default: By 2026, most ML workloads will reside entirely in the cloud. Vertex AI's unified platform - from data pipelines to model registry to deployment endpoints - eliminates the duct-tape integration that plagued earlier ML platforms.

🏗️ AI Hypercomputer: Google's AI Hypercomputer with Ironwood TPUs is redefining what's possible in training speed and cost. Architecture 04 (Distributed Training) is perfectly positioned to leverage this.

🔒 Governance is Non-Negotiable: The EU AI Act, evolving GDPR enforcement, and industry-specific regulations (HIPAA, SOX, FedRAMP) mean that architectures 10, 12, and 15 aren't optional - they're table stakes for enterprise ML.

Key Takeaways

If you remember nothing else from this article, remember these:

- 🏗️ Architecture before algorithms. A great model on bad infrastructure is worse than a decent model on great infrastructure.

- 🔄 Automate everything. CI/CD/CT pipelines, drift detection, feature engineering. If a human is doing it manually, it will break.

- 💰 Cost optimization is a feature. Spot VMs, model compression, and autoscaling can cut your ML bill by 60-80%. There's no excuse for burning money.

- 🔒 Privacy and compliance are not afterthoughts. Federated learning, explainability, and model governance should be designed in from Day 1.

- 📊 Monitor or die. Production models degrade silently. Jensen-Shannon divergence and SHAP-based feature attribution drift are your early warning systems.

- 🧩 Think in building blocks. These 20 architectures are composable. A fraud detection system might combine Feature Store (02) + Online Inference (05) + Graph ML (19) + Drift Detection (11) + Explainability (12). Pick and stack.

- 🚀 Start simple, then evolve. Begin with AutoML (16) and MLOps (09). Add complexity only when you need it.

⚡ The Ultimate Pro Insight

The difference between a data science experiment and a production ML system isn't the model - it's the 22 other things around it: data governance, feature management, deployment strategy, monitoring, explainability, cost optimization, compliance, disaster recovery, and more. This library covers all of them.

Final Thoughts: The ML Architect's Manifesto

We're at an inflection point.

The tooling exists. The architectures are documented. The cloud providers are racing to make ML infrastructure as simple as terraform apply. Google Cloud's Vertex AI platform, combined with services like BigQuery, Dataflow, Pub/Sub, and Confidential Computing, has created an ecosystem where the full ML lifecycle is genuinely manageable.

But here's the thing - tools don't build systems. Engineers who understand architecture build systems.

These 20 architectures are not prescriptions. They're blueprints you can adapt. Swap Cloud Run for GKE. Use Dataproc instead of Dataflow. Choose different storage tiers. The architecture patterns remain the same even as the specific services evolve.

The companies that will win the AI race aren't the ones with the most GPUs or the fanciest models. They're the ones that can reliably, securely, and cost-effectively move models from notebook to production - again and again and again.

The ~86% failure rate is not inevitable. It's a choice.

Choose architecture. Choose automation. Choose production.

And start building. 🚀

If this article helped you think differently about ML infrastructure, Share it with your network. Found a specific architecture interesting? Drop a comment - I'd love to dive deeper into any of them.

The complete GCP ML Architecture Library - including all 20 architectures, Terraform code, industry mappings, and diagrams - is available in the project repository.

📌 Connect & Support

🐙 GitHub Repository: View Source Code + Terraform Templates

📧 Email: connect@jaydeepgohel.com — Let's connect and discuss cloud architecture

☕ Buy Me a Coffee: If you found this work valuable and want to support more content like this, buy me a coffee ☕

This article is with little help of AI

💬 Feedback: Share your thoughts in the comments below — What did you love? What can be improved? Your feedback helps me create better content! 👇

Comments

Post a Comment